Gallery of Chatbot-Related Images with AI-Generated Commentary

Note: These images were created by first prompting a chatbot to write a list of prompts, which were then used to generate the images. All of the commentary on this page is also AI-generated!

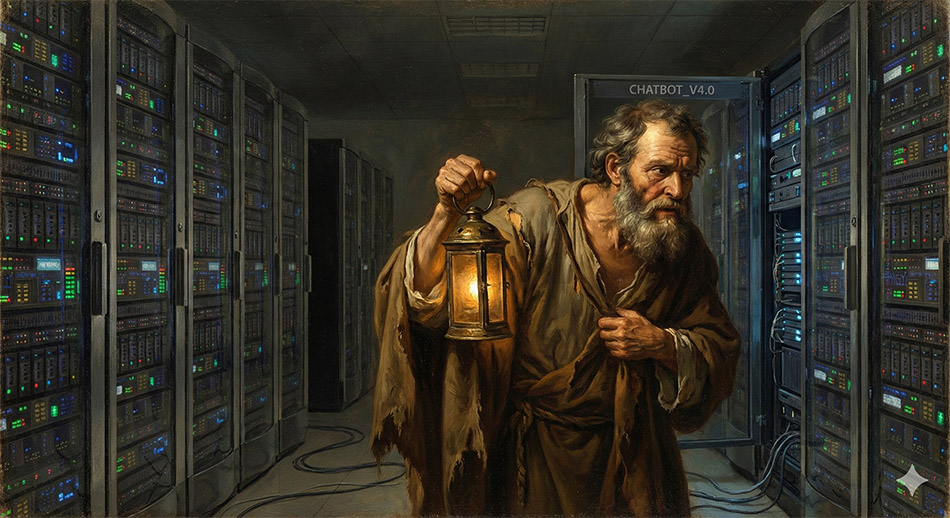

“Diogenes of Sinope, the ancient Greek Cynic philosopher, is famous for his ascetic lifestyle and his deliberate acts of public disruption meant to expose the irrationality of societal customs. His most famous exploit involved carrying a lit lantern through the streets of Athens in broad daylight, peering into the faces of citizens and claiming to be searching for a single ‘honest man’.

“In the context of modern LLMs, this historical anecdote provides a perfect satirical framework. LLMs are notoriously prone to ‘hallucinations’: fabricating plausible, highly confident, but entirely false information. Because the system relies on statistical pattern recognition rather than a database of facts, the AI has no internal concept of truth or honesty. Diogenes therefore serves as the ultimate humorous metaphor for a frustrated modern user trying to extract verifiable facts from a generative text model.”

The humor in this prompt relies on the extreme juxtaposition between the classical, organic antiquity of the philosopher and the sterile, geometric hyper-modernity of the AI data center. The instruction to use chiaroscuro (strong contrasts between light and dark) focuses the viewer’s eye directly on the lantern and the server, emphasizing the epistemological search for truth amid the opaque processing of neural networks.

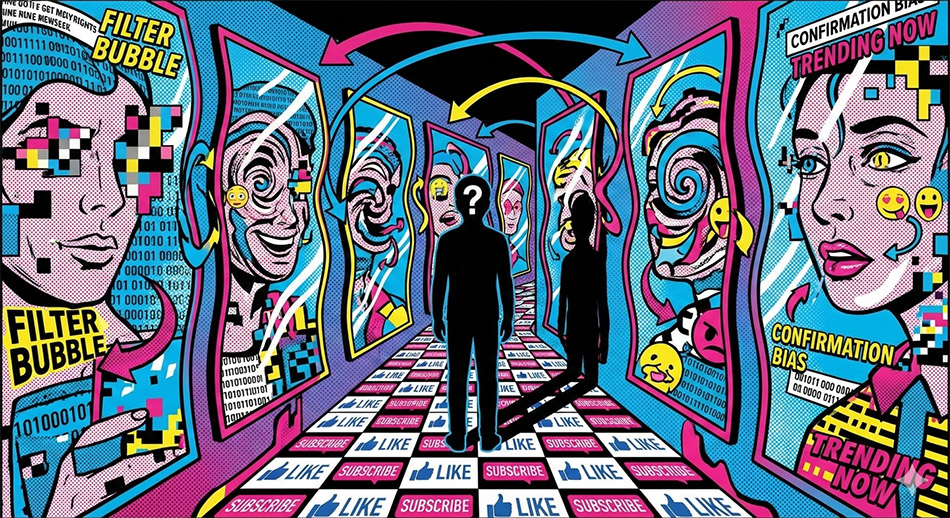

Chatbots reflect, refract, and sometimes distort human language and societal biases back to the user. Because generative AI models are trained on vast datasets scraped from the internet, they inherently encode the biases, discrimination, and specific cultural viewpoints present in that data. The AI does not provide an objective, mind-independent truth; rather, it provides a statistical reflection of humanity’s digital footprint.

The surrealist pop-art style highlights the modern, commercial nature of AI training data. By showing the reflections as fragmented text and pixels, the illustration visually communicates the epistemic risk of treating AI as an oracle. The viewer is reminded that the AI is not generating novel truths but reflecting the user’s own inputs mixed with a distorted aggregation of historical data.

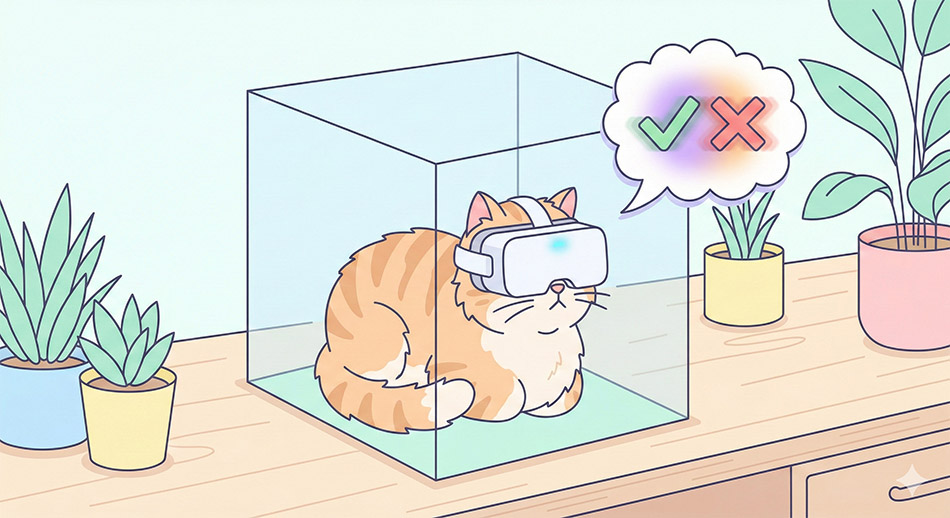

The Superposition of Truth and Hallucination: Austrian physicist Erwin Schrödinger proposed his famous “cat in the box” thought experiment in 1935 not to validate quantum mechanics, but to highlight what he saw as the absurdity of the Copenhagen interpretation. The experiment posits that a cat sealed in a box with a radioactive trigger exists in a quantum superposition, both alive and dead, until the box is opened and observed.

In the philosophy of AI, this concept maps neatly onto the state of an LLM just before it generates output. When a user submits a prompt, the neural network computes a probability for every token in its vocabulary (typically tens of thousands of candidates or more) to decide what comes next. The “softmax” function turns the network’s raw scores into this probability distribution, and only when the decoding step selects a single token from it does the answer “collapse”. Until then, the chatbot’s impending answer exists in a superposition of absolute factual truth and complete, unhinged hallucination.
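The difference between computing the probability distribution and collapsing it to one token can be sketched in plain Python. This is a toy illustration, not how any particular model is implemented: the four-word vocabulary, the logit values, and the helper names `softmax` and `sample_token` are invented for the example.

```python
import math
import random


def softmax(logits):
    """Turn raw scores (logits) into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(vocab, logits, rng):
    """'Collapse' the distribution into a single token by random sampling."""
    probs = softmax(logits)
    return rng.choices(vocab, weights=probs, k=1)[0]


# Hypothetical four-token vocabulary and made-up logits, for illustration only.
vocab = ["Paris", "Lyon", "Atlantis", "banana"]
logits = [4.0, 2.0, 1.0, -2.0]

probs = softmax(logits)
for token, p in zip(vocab, probs):
    print(f"{token:>8}: {p:.3f}")

rng = random.Random(0)  # seeded so the run is reproducible
print("sampled:", sample_token(vocab, logits, rng))
```

Every candidate, including the fabricated “Atlantis”, keeps a nonzero probability until the sampling step picks one, which is one way a fluent but false continuation can slip through.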

The use of clean vector art and minimalistic outlines keeps the image accessible and illustrative, preventing the complex quantum concept from becoming visually muddy. The overlapping checkmark and X humorously communicate the probabilistic uncertainty inherent in generative AI systems.

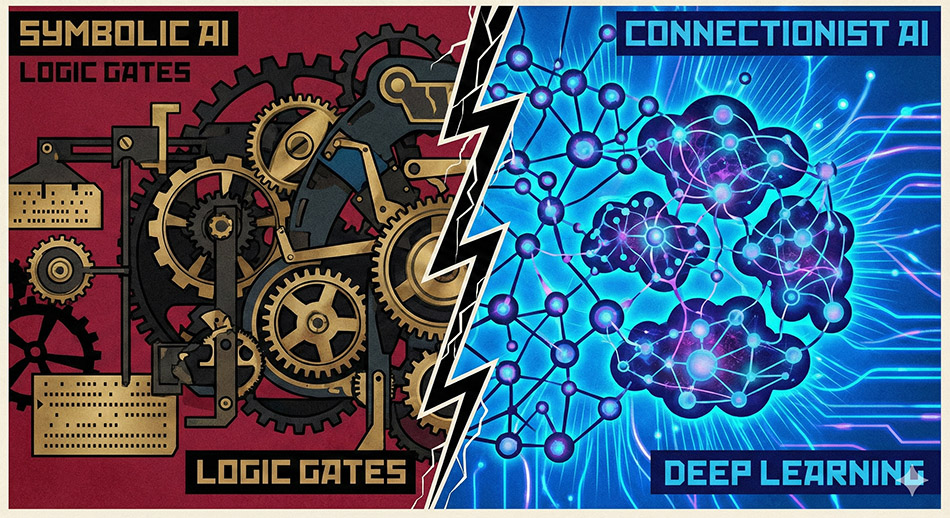

A striking constructivist art illustration representing a scientific paradigm shift in artificial intelligence. On the left side of the image, a rigid, highly structured mechanical clockwork mechanism made of iron and brass represents classical symbolic AI. In the center, a dramatic fracture splits the canvas. On the right side, the mechanical gears dissolve into a fluid, glowing, organic network of interconnected neural pathways and vibrant data clusters, representing the connectionist deep learning paradigm. Bold geometric shapes, stark contrasting colors of crimson, black, and electric blue, high visual tension, conceptual.

The constructivist style, known for its bold geometric forms and industrial themes, perfectly captures the structural nature of scientific paradigms. The visual dissolution of gears into neural pathways conveys, directly and intuitively, how the foundational philosophy of artificial intelligence has evolved from explicit programming to emergent, statistical learning.
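A deliberately tiny Python contrast between the two paradigms the image depicts: a hand-written rule for logical AND (the symbolic side) and a single perceptron that arrives at the same behavior from labeled examples (the connectionist side). The choice of AND, the classic perceptron learning rule, and the function names are illustrative assumptions; modern deep learning uses vastly larger networks trained by gradient descent.

```python
# Symbolic approach: the behavior is written down explicitly as a rule.
def symbolic_and(a, b):
    return 1 if (a == 1 and b == 1) else 0


# Connectionist approach: a single perceptron learns the same behavior
# from labeled examples, nudging its weights whenever it makes a mistake.
def perceptron_predict(weights, bias, a, b):
    return 1 if weights[0] * a + weights[1] * b + bias > 0 else 0


def train_perceptron(examples, epochs=20, lr=1):
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for (a, b), target in examples:
            error = target - perceptron_predict(weights, bias, a, b)
            weights[0] += lr * error * a
            weights[1] += lr * error * b
            bias += lr * error
    return weights, bias


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
# The "training data" is generated from the rule, purely for comparison.
examples = [((a, b), symbolic_and(a, b)) for a, b in inputs]
weights, bias = train_perceptron(examples)

for a, b in inputs:
    print(a, b, "rule:", symbolic_and(a, b), "learned:", perceptron_predict(weights, bias, a, b))
print("learned weights:", weights, "bias:", bias)
```

The learned numbers (two weights and a bias) reproduce the rule without stating it anywhere, a miniature version of the shift from explicit to emergent behavior that the gears-to-neurons image dramatizes.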

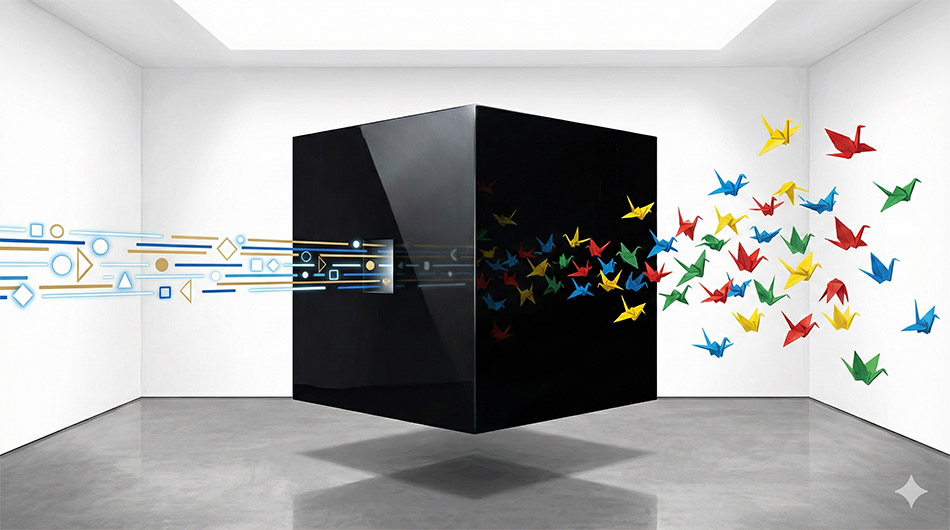

One of the most pressing issues in the philosophy of science regarding artificial intelligence is the “black box” problem. This metaphor, which dates back to the early days of cybernetics, describes a system whose internal processes are entirely opaque to users.

Modern LLMs and other deep learning systems, which can contain billions or even trillions of parameters, function in practice as black boxes. While a user knows the input (the prompt) and the output (the generated text), the transformation that occurs across the neural network’s hidden layers is so massively complex that no human can trace the exact chain of reasoning step by step. This opacity presents severe ethical and legal challenges.

If an autonomous system makes a catastrophic mistake—such as an AI-driven vehicle causing an accident or a medical AI misdiagnosing a patient—the black box nature of the system prevents investigators from understanding exactly why the decision was made. This lack of diagnostic capacity undermines trust and complicates the establishment of legal accountability.

The impenetrability of the black box is closely related to the concept of computational irreducibility: the idea that some systems are so intricate that no shortcut exists to predict their behavior, so the only way to know what the system will do is to run the full computation.
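Stephen Wolfram’s standard illustration of computational irreducibility is the elementary cellular automaton Rule 30: its update rule fits in one line, yet no general shortcut is known for predicting, say, the middle cell after a million steps other than computing every step in between. The Python sketch below simply runs the rule; the grid width, step count, zero-valued boundaries, and function names are arbitrary choices for the example.

```python
def rule30_step(cells):
    """Apply elementary cellular automaton Rule 30 once (cells beyond the edges count as 0)."""
    padded = [0] + cells + [0]
    new = []
    for i in range(1, len(padded) - 1):
        left, center, right = padded[i - 1], padded[i], padded[i + 1]
        pattern = (left << 2) | (center << 1) | right  # neighborhood as a number 0..7
        new.append((30 >> pattern) & 1)               # that bit of the rule number 30
    return new


def run_rule30(width, steps):
    cells = [0] * width
    cells[width // 2] = 1  # start from a single live cell in the middle
    history = [cells]
    for _ in range(steps):
        cells = rule30_step(cells)
        history.append(cells)
    return history


for row in run_rule30(width=31, steps=15):
    print("".join("#" if c else "." for c in row))
```

The printed triangle looks orderly on its left edge but chaotic inside; to learn what any later row contains, the program has to compute all the rows before it.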

René Magritte’s specific brand of “realist Surrealism” presents everyday or geometric objects in highly unusual, logically impossible contexts to challenge the viewer’s perceptions of reality. The visual transition from perfectly ordered input data to chaotic, emergent output perfectly encapsulates the black box problem. It visually asks the philosophical question: what transformative, opaque mechanism lies within the monolith?

The journey of language through a Transformer begins with Tokenization, where input text is broken down into smaller, manageable units (words or subwords). Each token is assigned an integer ID, and these IDs are meaningless to a machine until they undergo Text Vectorization, where each token is mapped to a vector in a high-dimensional continuous space by looking up its row in an Embedding matrix.
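A minimal sketch of those two steps, assuming a hypothetical five-entry vocabulary and a whitespace tokenizer in place of a real subword tokenizer. The embedding values are random numbers standing in for trained weights, so, unlike a trained model’s embeddings, they encode no semantic proximity; the point is only the mechanics of going from text to IDs to vectors.

```python
import random

# Hypothetical toy vocabulary mapping each token to an integer ID.
vocab = {"the": 0, "cat": 1, "dog": 2, "sat": 3, "<unk>": 4}


def tokenize(text):
    """Whitespace tokenizer, standing in for a real subword tokenizer.
    Unknown words map to the <unk> ID."""
    return [vocab.get(word, vocab["<unk>"]) for word in text.lower().split()]


# Embedding matrix: one row of d_model numbers per vocabulary entry.
d_model = 8  # toy size chosen for readability
rng = random.Random(42)
embedding = [[rng.gauss(0.0, 1.0) for _ in range(d_model)] for _ in range(len(vocab))]


def embed(token_ids):
    """Text vectorization: look up each token ID's row in the embedding matrix."""
    return [embedding[token_id] for token_id in token_ids]


ids = tokenize("The cat sat")
vectors = embed(ids)
print(ids)  # [0, 1, 3]
print(len(vectors), "vectors of length", len(vectors[0]))  # 3 vectors of length 8
```

In a real model the embedding matrix is learned during training, which is what eventually places related tokens near one another.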

In the smallest GPT-2 model, for example, every token is represented as a 768-dimensional vector. After training, words with similar meanings tend to lie closer together in that 768-dimensional space, so spatial proximity carries semantic meaning.

Because Transformers process all tokens simultaneously rather than sequentially, the attention mechanism on its own has no inherent sense of word order. To solve this, Positional Encoding is injected into the embeddings. In the original Transformer architecture, a unique positional vector built from sine and cosine functions of different frequencies is added to each embedding, mathematically stamping each word with its location in the sentence. GPT-2 takes a different route and learns its positional embeddings during training, but the goal is the same: each token’s vector now carries its position.
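The sine/cosine scheme from the original Transformer paper (“Attention Is All You Need”) can be written out in a few lines of plain Python. This sketch uses a tiny vector size of 6 rather than a realistic one, and the helper names are our own rather than any library’s API.

```python
import math


def sinusoidal_positional_encoding(num_positions, d_model):
    """PE(pos, 2k) = sin(pos / 10000^(2k/d_model)), PE(pos, 2k+1) = cos(same angle).
    Each sine/cosine pair shares one frequency; wavelengths grow across dimensions."""
    pe = []
    for pos in range(num_positions):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the exponent 2k / d_model.
            angle = pos / (10000 ** ((i - i % 2) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe


def add_positions(token_vectors):
    """Stamp each token vector with its position by element-wise addition."""
    pe = sinusoidal_positional_encoding(len(token_vectors), len(token_vectors[0]))
    return [[x + p for x, p in zip(vec, pos_vec)] for vec, pos_vec in zip(token_vectors, pe)]


pe = sinusoidal_positional_encoding(num_positions=4, d_model=6)
for pos, row in enumerate(pe):
    print(pos, [round(v, 3) for v in row])

# The same word vector at two different positions becomes distinguishable.
same_word_twice = [[0.5] * 6, [0.5] * 6]
stamped = add_positions(same_word_twice)
print(stamped[0] != stamped[1])  # True
```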

Buridan’s Ass, named after the 14th-century French philosopher Jean Buridan, is a thought experiment that ridicules deterministic philosophy. It imagines a perfectly rational donkey placed precisely midway between two identical bales of hay. Because the donkey lacks free will and always acts in a strictly rational manner, it can find no logical reason to choose one bale over the other, and so it starves to death in a state of paralysis.

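When applied to artificial intelligence, Buridan’s Ass satirizes the lack of free will and true creativity in machines. Presented with two statistically identical continuations, a purely rational chooser would, like the donkey, have no reason to prefer either. Real decoders do not freeze, however: greedy decoding breaks an exact tie by a fixed rule (typically taking the first of the tied candidates), while sampling injects randomness to force a choice, with the “temperature” parameter controlling how sharply or evenly the probability is spread across the options.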
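A small Python sketch of both escape routes from the paradox, assuming invented logits for two equally good “bales of hay” and one worse option. Note that temperature never breaks the tie itself: it only changes how much probability the tied options share with the rest, and it is the random sampling step that actually chooses between them. The function names are hypothetical.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Divide logits by the temperature before the softmax.
    Low temperature sharpens the distribution; high temperature flattens it."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(logits):
    """Greedy decoding: take the highest score. On an exact tie, max()
    returns the first maximal index, so the 'donkey' never starves."""
    return max(range(len(logits)), key=lambda i: logits[i])


# Two 'bales of hay' with identical scores, plus a clearly worse option.
logits = [2.0, 2.0, 0.5]
print("greedy choice:", greedy_pick(logits))  # 0: the tie is broken by position

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}:", [round(p, 3) for p in probs])

# Sampling picks between the tied options at random (seeded for reproducibility).
rng = random.Random(7)
weights = softmax_with_temperature(logits, 1.0)
picks = [rng.choices(range(3), weights=weights)[0] for _ in range(1000)]
print("sampled counts:", [picks.count(i) for i in range(3)])
```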

By replacing the hay with server racks and the biological donkey with a robot, the classical paradox is instantly updated for the informatics age, illustrating the limits of purely rational, non-conscious systems.