5.5.5 Pragmatic View of Chatbots: Part 5

Just a Tool, Not a Brain?

When computer scientists cleverly use the term ‘artificial neural network’ to describe part of the architecture of chatbots, they are merely using an abstract computational metaphor. Neither the machine nor its software directly mimics the interaction of neurons in the central nervous system of mammals. These networks could equally well have been called node-based or nodal systems. None of the physical parts that make up these computing systems has any proven direct physical resemblance to any form of biological structure, including neurons. The words ‘neural’, ‘neuron’ and ‘perceptron’ function within computer science as abstract metaphors that are very far removed from anything biological, including networks of neurons.

Although the logic gates of computers are physically distinct from the biological neurons of the human central nervous system, such as the pyramidal cells, granule cells, basket cells and chandelier cells, it has been argued from electrophysiological studies that even parts of a neuron’s dendrite can implement the biological equivalent of logical operations (see source). We also know that biological neurons are capable of generating excitatory and inhibitory signals that control the output of cells to which they are synaptically connected. Feedback loops operating within the brain are therefore extremely plausible (see the diagram below). There are even thought to be neuronal microcircuits within the cortex. It even seems that individual neurons can mimic extended parts of artificial neural networks.

We cannot reasonably doubt that it is the connections between neurons of the central nervous system which endow us with linguistic abilities. Precisely how this occurs is as yet unknown. However, significant progress has been made in determining regions of the brain which are involved in language processing (see this video of a conference plenary lecture from 2026). Empirical evidence from neuroimaging methods suggests that we have embodied cognition. No such possibility exists for a chatbot. In 2023, an international team of neuroscience researchers concluded “that motor and perceptual information comprised in language representation rely on brain resources partially overlapping with motor and perceptual experience” (see source). Differences in brain activation patterns have been found between left-handed and right-handed people when action words are encountered (see source). Interestingly, in that study it was concluded that the activation observed in “the lexical decision task was not due to conscious mental imagery, but rather to lexical processes, per se”.

In short, chatbots are conceptually and physically distinct and separate from humans, even though they can output large amounts of fluent text that in the past we would only have imagined could be the result of biological intelligence. Indeed, it is rather unfortunate that we use the term ‘artificial intelligence’ rather than ‘machine learning’. The word ‘artificial’ is, I strongly suspect, likely to be underplayed in the minds of most people, which helps create the illusion that such systems are ‘intelligent’ rather than computationally very impressive. Intelligence is mimicked by computational processes during the generation of text.

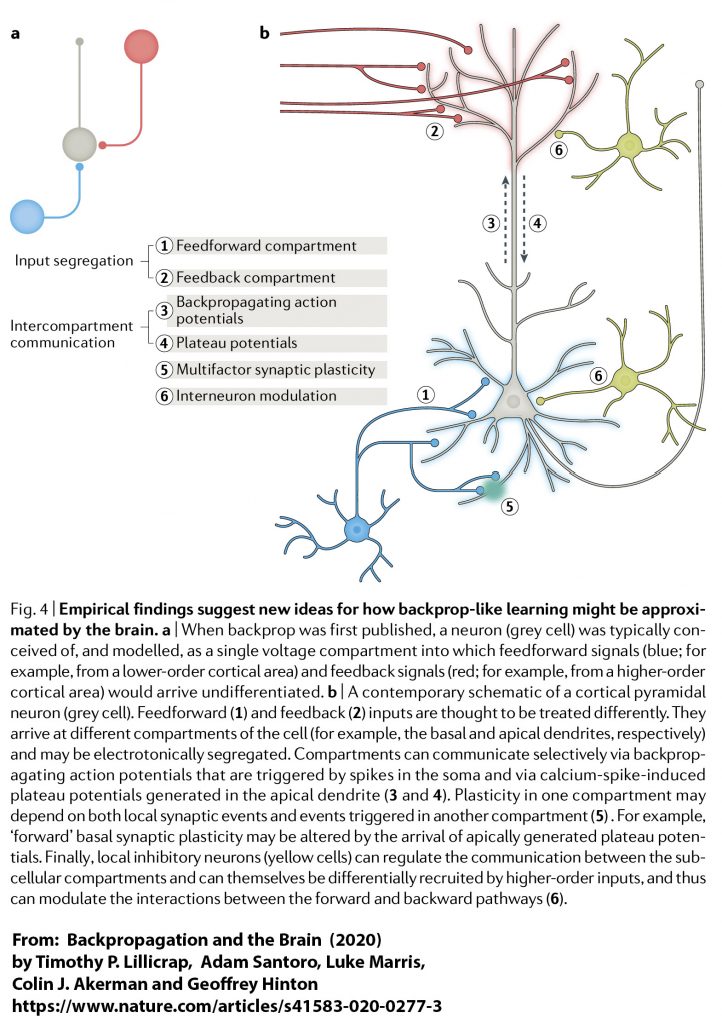

Even if we were to view the central nervous system as being capable of computation from an abstract information theory perspective, there are very significant differences between computers and biological tissue. The transformer architecture is very densely connected from a mathematical perspective. Each node in a fully connected ‘layer’ of the network is coupled to every node in the adjacent layers. By comparison, the human brain is sparsely connected, especially over long distances. Although there are certainly feedback circuits in the human brain, there are not thought to be equivalent structures to support the ingenious backpropagation of error signals that contemporary chatbots use in ‘training’. Backpropagation ripples backwards from the error at the output through the entire network in order to ‘tune’ the weights of the network. Nevertheless, Hinton and colleagues have proposed that there could be mechanisms in the brain that are mimicked by generative AI systems (see the diagram above). However, the fact that the entire human brain is not thought to consume more than about 20 watts of power is a strong indicator that biological evolution has produced a significantly more efficient system for processing language than the power-hungry GPUs, data bus operations, and memory systems within computers.
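For readers who like to see the mechanics, the dense coupling and the backward flow of error described above can be illustrated with a very small sketch. This is a toy two-layer network in plain Python, not a transformer, and everything in it (the function names `forward`, `backward` and `train`, the layer sizes, the learning rate, the single training example) is an assumption chosen purely for illustration.

```python
import math
import random

def forward(x, W1, W2):
    # Dense coupling: each hidden node sums over every input node,
    # and each output node sums over every hidden node.
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W1]
    y = [sum(w * hi for w, hi in zip(row, h)) for row in W2]
    return h, y

def backward(x, h, y, target, W2):
    # Error at the output, for loss = 0.5 * sum((y - target)^2).
    err = [yi - ti for yi, ti in zip(y, target)]
    grad_W2 = [[e * hi for hi in h] for e in err]
    # The output error is passed backwards through W2 and through
    # the derivative of tanh, which is (1 - h^2).
    delta_h = [
        sum(W2[k][j] * err[k] for k in range(len(err))) * (1.0 - h[j] ** 2)
        for j in range(len(h))
    ]
    grad_W1 = [[d * xi for xi in x] for d in delta_h]
    return grad_W1, grad_W2

def train(steps=200, lr=0.1, seed=0):
    # Hypothetical toy setup: 3 inputs, 4 hidden nodes, 2 outputs.
    rng = random.Random(seed)
    W1 = [[rng.uniform(-0.5, 0.5) for _ in range(3)] for _ in range(4)]
    W2 = [[rng.uniform(-0.5, 0.5) for _ in range(4)] for _ in range(2)]
    x, target = [0.5, -1.0, 0.25], [1.0, -0.5]
    losses = []
    for _ in range(steps):
        h, y = forward(x, W1, W2)
        losses.append(0.5 * sum((yi - ti) ** 2 for yi, ti in zip(y, target)))
        g1, g2 = backward(x, h, y, target, W2)
        # 'Tuning': nudge every weight a little against its gradient.
        W1 = [[w - lr * g for w, g in zip(wr, gr)] for wr, gr in zip(W1, g1)]
        W2 = [[w - lr * g for w, g in zip(wr, gr)] for wr, gr in zip(W2, g2)]
    return losses
```

Calling `train()` returns the loss at each step, which should shrink as the weights are adjusted. The point of the sketch is only that every weight in every layer receives a correction derived from the single error measured at the output, which is the kind of global signal the brain is not thought to have a direct equivalent for.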

Jonathan Birch and colleagues, in their very useful commentary on Unlimited Associative Learning (UAL) in animals, say it “is the capacity for associative learning on novel, compound stimuli, with the potential for second-order conditioning and trace conditioning, allowing for the open-ended accumulation of long chains of associative links during an animal’s lifetime. It includes the ability to bridge temporal gaps: to learn about conditional stimuli that are no longer present. UAL is posited to be a natural cluster—a cluster of enhanced learning abilities that are closely linked”. One of the key phrases here is ‘compound stimuli’. It is obviously legitimate to see text as a ‘stimulus’ for all those individuals who have learned to read at least one language. However, human life goes far beyond the very limited stimulus of text and the richer value of language, as we all know so well. To reduce human understanding to only the contemplation and generation of speech and text would be an act of extreme folly. Of course, that is not what Hinton is advocating.

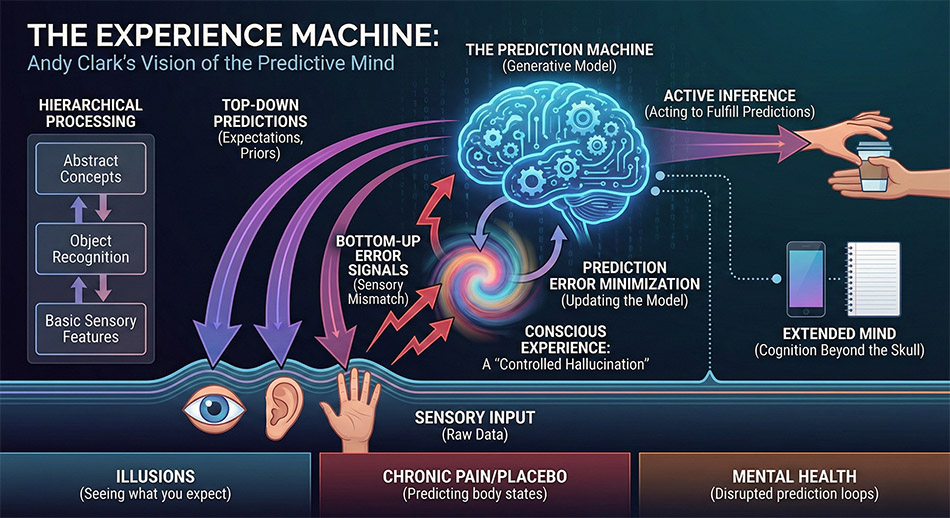

There are others, such as Andy Clark, who make even richer associations between generative AI systems and the human brain and its associated sensory apparatus. Clark views the brain as a computational machine capable of making predictions that drive behaviour and sustain survival (see this video of him lecturing on the topic). From Clark’s perspective, as we develop, we learn to make generative predictions about the world and are constantly comparing predictions with perceptions.

An AI-generated infographic explaining Andy Clark’s book:

The Experience Machine: How Our Minds Predict and Shape Reality

For the present generation of chatbots, there is no external experiential world, only text. These systems merely play a language game of word association. Indeed, in English, although less so in some other languages, it is the relative positions of words within properly formed text that help to drive the human input and the machine output. In the machine output, there is nothing more than a probabilistically calculated sequence of numerical tokens converted back to words. By contrast, for us as embodied creatures, there are internal experiences, emotions, aspirations and feelings, and also external worlds, which text can symbolically represent, albeit imperfectly and fallibly. There are researchers in AI, such as Yann LeCun, who feel that some kind of ‘world model’ and hybrid neuro-symbolic architectures, which are yet to be developed, will facilitate new developments in robotics. While that is probably accurate, my intuition is that ‘world models’ are not a cure for the errors which chatbots generate in very abstract, non-concrete areas of language use. For example, no amount of physics will inform some of the higher-order abstractions encountered in the philosophy of science, never mind the very complex biological and social world that we inhabit.
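To make the phrase ‘a probabilistically calculated sequence of numerical tokens converted back to words’ concrete, here is a deliberately tiny sketch in Python. It is not how any real chatbot is built: the five-word vocabulary, the `toy_scores` function standing in for a trained network, and all other names are hypothetical and chosen only to show the loop of scoring, sampling and decoding.

```python
import math
import random

# Hypothetical toy vocabulary: each word is known to the machine only by its index.
VOCAB = ["the", "cat", "sat", "mat", "."]

def softmax(scores):
    # Turn arbitrary scores into probabilities that sum to 1.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def toy_scores(context_ids):
    # Stand-in for a trained network (an assumption for illustration):
    # it simply favours the token whose index follows the last one.
    last = context_ids[-1]
    favoured = (last + 1) % len(VOCAB)
    return [2.0 if i == favoured else 0.0 for i in range(len(VOCAB))]

def generate(start_id, n_tokens, seed=0):
    rng = random.Random(seed)
    ids = [start_id]
    for _ in range(n_tokens):
        probs = softmax(toy_scores(ids))
        # Sample the next token id in proportion to its probability.
        next_id = rng.choices(range(len(VOCAB)), weights=probs, k=1)[0]
        ids.append(next_id)
    return ids

def decode(ids):
    # Only at this final step do the numbers become words again.
    return " ".join(VOCAB[i] for i in ids)
```

For example, `decode(generate(0, 6))` produces a short string of words drawn from the toy vocabulary. Nothing in the loop refers to cats, mats or anything else in the world; it manipulates indices and probabilities throughout, which is the sense in which the output is ‘only text’.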

Version 1.2