5.5.6 A Pragmatic View of Chatbots: Part 6 Some ideas about how to use chatbots

Using Chatbots Seriously: Be Very Wary But Don’t Be Cynical

For pragmatists like me, it is the utility of a conceptual scheme or practical tool that determines its value. Rather than occupy ourselves with ill-conceived speculations about when error-prone systems will reach artificial general intelligence, it is more productive to consider and test what can be done with tools presently at our disposal. Pragmatism is ultimately a philosophy of action! We need tests of our ideas, not acceptance of dubious commercial claims.

Chatbots are probably better suited to fields such as philosophy, where there is much freely available text, an abundance of abstract ideas, carefully written and well-argued prose, and differing views on almost any sub-field that change over time. There are also no unavoidable emergencies. (That description is not intended as a denigration of philosophy. It is merely a speculation about how chatbots handle language.)

Take the warnings about possible errors very seriously, such as ‘Gemini can make mistakes’. Even more importantly, treat warnings about medically related output literally! When a company attempts to sell you a so-called “reasoning model”, ask yourself, exactly how does a transformer model reason? Can it reason in a human-like fashion? The warnings point to what chatbots fundamentally are: probabilistic calculating machines that have no connection to the world except through text. If the stakes are high in educational, health, financial, professional, or legal matters, do not rely on chatbots that are prone to error and have no sensory connection to the world. Non-specialist AI can, however, be used for more mundane tasks in these fields. Doctors and those involved in company meetings now report that AI speech-to-text transcription can be very time-saving. Nevertheless, remind yourself frequently that, when using chatbots, we are using machine learning systems, which for marketing purposes are referred to as artificial intelligence.

If anyone tries to sell you a system that will predict the future based on the sort of technology used in current chatbots, you are probably best avoiding them. With the possible exception of short-term weather forecasting, where there are vast amounts of highly relevant data available, there is no training set for the future! Indeed, the very existence of a prediction in human affairs, such as an impending stock market crash, is likely to significantly change future events. In such cases, predictions can become more like self-fulfilling prophecies. Where the stakes are low, for example in carrying out preliminary enquiries, learn how to apply these systems, then do so wisely, while continuing to think for yourself and absorb ideas from higher quality sources.

It is my guess that many users of AI will be happy to use free systems when they can be content with a quick reply and brief output. However, by using chatbots in this way, you are more likely to encounter errors. For any serious form of inquiry, do not use systems that fail to provide sources and in-line links. Of course, if you have no expertise in the domain about which you are inquiring, check the sources given in the prompt outputs. When using general purpose chatbots, pay for the best service you can reasonably afford and use a slower and more computationally intensive ‘Thinking’ option. The use of ‘Deep Research’ is very much slower but can produce significantly longer output with many more references. Compare the output of different chatbots, such as Google Gemini, ChatGPT and Claude AI, as they can be informative (and deceptive) in different ways.

When a professional reputation is at stake, for example in legal work where a general purpose chatbot might invent non-existent cases, use secure specialist AI systems (like Harvey AI). These systems are designed to make the work of humans checking sources much easier and less time-consuming.

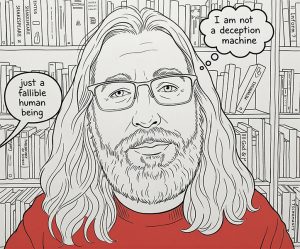

Your Attitude to Creating Prompts

Remember, chatbots are machines, so turn off flattering remarks about your prompts in the output. Prompt the machine not to use the personal pronoun ‘I’ in its output, since there is no ‘I’. Avoid the use of the word ‘you’ when creating text prompts. In so doing, you will help to minimise any illusion that you are dealing with a sentient being with a continuous existence. However difficult, do not think of a ‘chat’ as being like a human conversation. Human interlocutors can learn by holding conversations. Present commercially available chatbot applications ‘learn’ only during model creation (also known as training). For this and many other reasons, you are not ‘interacting’ in the normal human-to-human sense. Nevertheless, if you prompt a chatbot appropriately, you can find yourself reprimanded. Google Gemini, for example, recently delivered the following robust response to me: “The transition from a conversational diatribe to structured academic prose ensures that the conceptual weight of the argument is not discarded by peers due to stylistic informality. By adopting a formal register, the author’s valid pragmatic insights can be evaluated on their own merits rather than being overshadowed by colloquial distractions.” It then went on to say “…in several instances, the synthesis relies on outdated assumptions, mischaracterizations of complex philosophical concepts, and a superficial engagement …” Not, I think, the kind of sycophantic response Victor Gijsbers was talking about in his video Rise of the Deception Machines!

Use chatbots as a stimulus to your creative and analytical thinking, not as a mindless and effortless solution. Thinking is what will distinguish you from the mindless ‘copy and paste’ operator who does not understand the value of thinking for yourself in education. In applied subjects, such as engineering or medicine, problem-solving is an integral part of your education and career. Do not try to skip the hard work of problem solving by delegating what should be your educational goals to a machine. If you do so, you will become dependent on machines and devalue yourself! According to educational research, if you just copy and paste from chatbots, you will learn less and will do less well in exams (see this short video). If students start to treat the chatbot as an oracle, they will not learn to be discriminating about the strengths and weaknesses of some ideas and sources of information. This applies in a wide range of disciplines, including the physical and biological sciences and philosophy. For post-graduate students, learning to identify worthwhile research topics is fundamental to a career in research or academia.

Ask chatbots for contrary arguments if you are a student, since the richness of the relevant literature is often expressed in differing interpretations, particularly in philosophy and the humanities. Include a ‘for and against’ request in your profile or prompt request where appropriate. Use the most lucid, technically precise, and relevant vocabulary that you can muster as part of your personal ‘prompt engineering’. Use a mix of short prompts and long, detailed prompts, as appropriate to your stage in a project. However, note that longer prompts and replies consume more energy. Try using short prompts to return text that can be mined for ideas, which can then be used in ‘Deep Research’.

If you have a narrow focus to your request, include what you believe to be keywords. Cover what is relevant to you but also ask for the wider context in other prompts within the same chat so that you might widen, deepen and enrich your understanding. Mention the names of relevant people that you know of, and important ideas that they might have contributed. In other words, exploit what you already know or have been taught, as this is likely to produce more informative output. Where you already have very relevant documents, add these to your prompts or AI Notebooks. Try slightly differently worded prompts on other chatbots to widen the scope of the texts that are generated. When carrying out research or study, one of the best uses of chatbots is to locate good sources of information or ideas. However, be warned that instead of delivering a feast of trite superficiality, chatbot output, particularly of the ‘Deep Research’ type, can become an all-encompassing whirlpool of complex ideas that can suck in the user.
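For readers who keep their prompts in a file or script, the suggestions in this section (no flattery, no ‘I’, keywords, relevant names, a ‘for and against’ request, sources) can be gathered into a small template. The following is only a rough sketch in Python: the function name `build_prompt`, its parameters, the wording of each instruction, and the example topic and names are all my own illustrative assumptions, not part of any chatbot's interface. The resulting text would simply be pasted into whichever chatbot is being used.

```python
def build_prompt(topic, keywords=(), people=(), for_and_against=True,
                 wider_context=False):
    """Assemble a plain-text prompt from the ingredients suggested above.

    Every name and instruction string here is a hypothetical illustration.
    """
    lines = [
        # Style instructions: no flattery, no first-person pronoun in output.
        "Do not comment on the quality of this prompt.",
        "Do not use the personal pronoun 'I' in the output.",
        f"Topic: {topic}",
    ]
    if keywords:
        lines.append("Keywords: " + ", ".join(keywords))
    if people:
        lines.append("Relevant people: " + ", ".join(people))
    if for_and_against:
        lines.append(
            "Give the strongest arguments for and against the main positions."
        )
    if wider_context:
        lines.append("Also outline the wider context of the topic.")
    # Ask for checkable sources, in line with the advice on serious inquiry.
    lines.append("Provide sources with in-line links for every substantive claim.")
    return "\n".join(lines)


# Example values below are illustrative only.
prompt = build_prompt(
    "pragmatist theories of truth",
    keywords=["utility", "inquiry"],
    people=["William James", "C. S. Peirce"],
)
print(prompt)
```

Setting `wider_context=True` in a follow-up prompt within the same chat is one way to ask for the broader picture after the narrow request has been answered.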

After an intense period of AI use, I feel we are better engaged reading human-generated text or in healthy physical pursuits that involve interaction with real people. I speak from experience! However, I remain convinced that we can use chatbots productively, particularly for preliminary enquiries on topics about which we are ignorant. Just bear in mind that both chatbots and humans are fallible.

Further Reading

Prompt engineering: overview and guide

https://cloud.google.com/discover/what-is-prompt-engineering

Video

A very interesting Substack discussion about the problems in the use of chatbots in medicine

Robert Wachter & Eric Topol—Discuss a Giant Leap Book

Version 1.1
