5.5.4 A Pragmatic View of Chatbots: Part 4

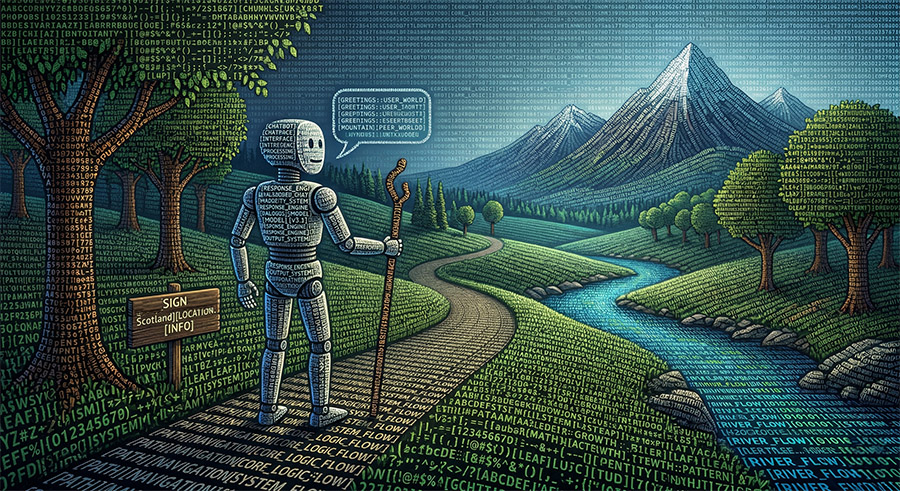

The Text-World of Chatbots

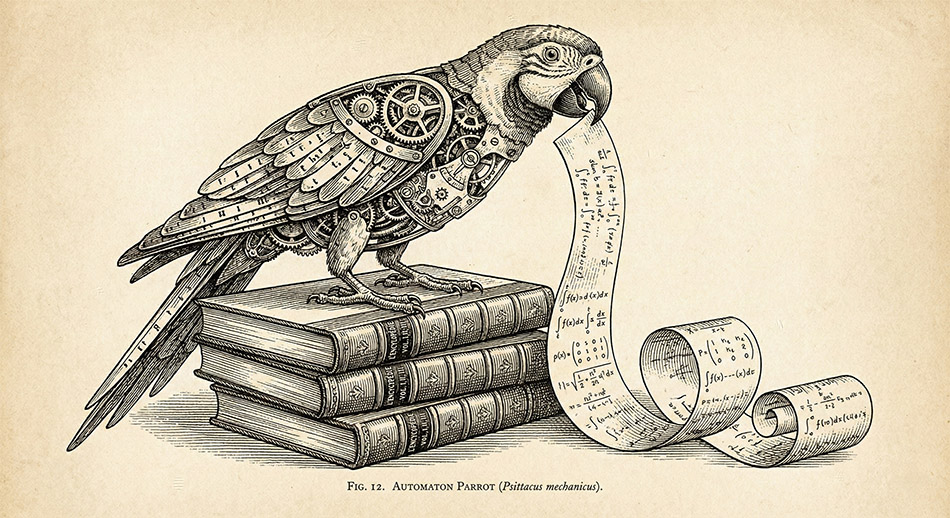

Some AI researchers, such as the Nobel Prize winner Geoffrey Hinton, now argue that large language models show ‘understanding’ in a way that is directly analogous to human comprehension (see this video from 2025). I prefer to think of them, in the amusingly memorable words of the computational linguist Emily Bender and her colleagues, as very sophisticated “stochastic parrots”. From this viewpoint, the ‘chatbot parrots’ reassemble human-generated text patterns in a fantastically complicated game of word association, in which input words are associated with output words. Like all metaphors, the ‘parrot’ idea should not be stretched too far: the chatbot does not store, or copy and paste, segments of text from its source material. ‘Parroting’ in this sense simply means using words without their having any meaningful association outside the text.

For humans, many words are associated with features of the external world that only a sentient creature can perceive. Other words are associated with states of mind that we call emotions, feelings or urges, which machines lack. We use words to describe previous states of the world and to make sensory or mechanistic predictions. At the same time, however, we constantly make predictions about the world without using any linguistic terms. We can also appreciate that words can be metalinguistic, that is, descriptive of language and symbols. Machines have none of these characteristics. Conversely, pre-school children do not rely on statistical measures of word association, and they have no need of numerical tokens when using words. The normalised numerical parameters used in dot-product calculations, which therefore form part of the basis of current chatbot function, do not even seem to have any proven algorithmic connection with brain function.
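To make the ‘word association’ picture a little more concrete, here is a toy sketch in Python. It rests on heavy simplifying assumptions. The five words and their three-number vectors are invented purely for illustration. A real chatbot learns vectors with thousands of dimensions and computes its scores through many layers of attention, not through a single lookup like this. What the sketch does show is the general idea. Normalised vectors are compared by dot products. The scores are turned into probabilities, and a next word is sampled at random, which is the ‘stochastic’ part of the parrot.

```python
import math
import random

# Hypothetical 3-dimensional "embeddings", invented purely for illustration.
# Real models learn far larger vectors from their training text.
VOCAB = {
    "cat":     [0.9, 0.1, 0.0],
    "dog":     [0.8, 0.2, 0.1],
    "purr":    [0.7, 0.0, 0.3],
    "engine":  [0.0, 0.9, 0.4],
    "carrier": [0.1, 0.8, 0.6],
}

def normalise(v):
    """Scale a vector to unit length, so dot products become cosine similarities."""
    length = math.sqrt(sum(x * x for x in v))
    return [x / length for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def association_scores(word):
    """Dot product of the normalised input vector with every other normalised vector."""
    q = normalise(VOCAB[word])
    return {w: dot(q, normalise(v)) for w, v in VOCAB.items() if w != word}

def softmax(scores, temperature=1.0):
    """Turn raw scores into a probability distribution (higher score -> more likely)."""
    m = max(scores.values())
    exps = {w: math.exp((s - m) / temperature) for w, s in scores.items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}

def next_word(word, temperature=0.1, rng=random):
    """The 'stochastic' step: sample an associated word in proportion to its probability."""
    probs = softmax(association_scores(word), temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]

probs = softmax(association_scores("cat"), temperature=0.1)
print(max(probs, key=probs.get))  # → dog
print(next_word("cat", rng=random.Random(0)))
```

Nothing in these numbers refers to cats or dogs as such. The ‘association’ is simply the geometry of the invented vectors.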

The Stochastic Parrot (Psittacus mechanicus)

[ Apparently Google Gemini can make jokes in Latin 🙂 ]

Hinton seems to believe that chatbots ‘understand’ because these systems can generate fluent text that is often conceptually coherent across more domains of knowledge than a large group of people could acquire in many lifetimes. My intuition is that we should not equate fluent text production by a machine with human-like comprehension. We should also be careful to distinguish the fluency of a bot’s output from its incisiveness, usefulness, applicability, and accuracy. The modern chatbot is clearly a master of syntax.

However, the more interesting philosophical question is whether the bots are also masters of semantics. The mature Ludwig Wittgenstein, who introduced the idea of language games, would have had us believe that meaning can be understood merely in terms of use. If that were the case, you might indeed think that chatbots tend towards mastery of semantics. However, you would then also have to accept that long strings of numbers, with no connection to the world, can encode meaning. For a human, meaning is embodied in a biological machine that is more complex than a fully equipped nuclear-powered aircraft carrier (probably one of the most complex systems ever created by humans).

I believe that a crucial part of language learning in children involves the use of ostensive definition, sensory experience, and biological embodiment in the world. (Also see my previous remarks.) I therefore do not subscribe to the view that meaning is use.

A more pragmatic way to view the chatbot is as a text-generating machine that can indeed act as a tool for generating erudite text ‘games’. It might also help to consider the Chinese Room argument, proposed in 1980 by the philosopher John Searle and summarised in the Stanford Encyclopedia of Philosophy as follows:

“Imagine a native English speaker who knows no Chinese locked in a room full of boxes of Chinese symbols (a database) together with a book of instructions for manipulating the symbols (the program). Imagine that people outside the room send in other Chinese symbols which, unknown to the person in the room, are questions in Chinese (the input). And imagine that by following the instructions in the program the man in the room is able to pass out Chinese symbols which are correct answers to the questions (the output). The program enables the person in the room to pass the Turing Test for understanding Chinese but he does not understand a word of Chinese”.

For me, there can be no linguistic meaning without a physical world. The chatbot’s ‘world’ is a gigantic store of vectors, encoded as domains of charge in silicon or stored electromagnetically as binary bits.

Hinton might be confusing novel and far more versatile ways of storing and retrieving information with our embodied human knowledge of the world and our abstract understanding. However, if human memory and understanding involve some kind of associative learning, particularly when abstract concepts (like epistemology) are encountered, Hinton might indeed be making a legitimate metaphorical or algorithmic connection (see my previous comments on human reasoning). Indeed, the Nobel Prize committee’s announcement specifically mentions ‘associative memory’ in relation to the dynamical Hopfield network of 1982, which is an early precursor of today’s Transformer models.
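For readers curious about what ‘associative memory’ means in the Hopfield sense, here is a minimal sketch of a classical Hopfield network in Python. It assumes binary ±1 units, the standard Hebbian storage rule and asynchronous sign updates. The two stored patterns are invented for illustration. Given a corrupted cue, the network settles back onto the nearest stored pattern. This is the sense in which the memory is ‘associative’: retrieval is by content rather than by address.

```python
import random

def train(patterns):
    """Hebbian rule: w_ij = (1/N) * sum over patterns of p_i * p_j, with no self-connections."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w

def recall(w, state, sweeps=5, rng=None):
    """Asynchronous updates: each unit in turn takes the sign of its weighted input."""
    rng = rng or random.Random(0)
    s = list(state)
    n = len(s)
    for _ in range(sweeps):
        order = list(range(n))
        rng.shuffle(order)  # update units one at a time, in random order
        for i in order:
            h = sum(w[i][j] * s[j] for j in range(n))
            s[i] = 1 if h >= 0 else -1
    return s

# Two illustrative 8-unit patterns (chosen to be orthogonal).
A = [1, 1, 1, 1, -1, -1, -1, -1]
B = [1, -1, 1, -1, 1, -1, 1, -1]
w = train([A, B])

cue = [-1, 1, 1, 1, -1, -1, -1, -1]  # pattern A with its first unit flipped
print(recall(w, cue) == A)  # → True
```

Storing more patterns than a network of this size can hold, or using patterns that overlap heavily, would make recall unreliable. The two orthogonal patterns are chosen to keep the example clean.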

Version 1.2